Leveraging Stereopsis for Saliency Analysis

Yuzhen Niu¹, Yujie Geng¹,², Xueqing Li², and Feng Liu¹

¹Computer Science Department, Portland State University

²Computer Science Department, Shandong University

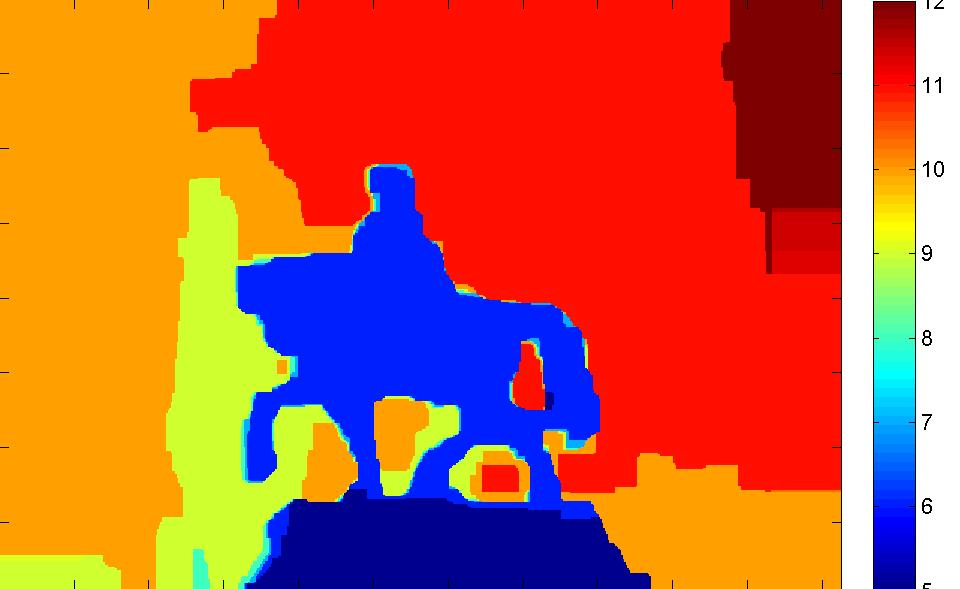

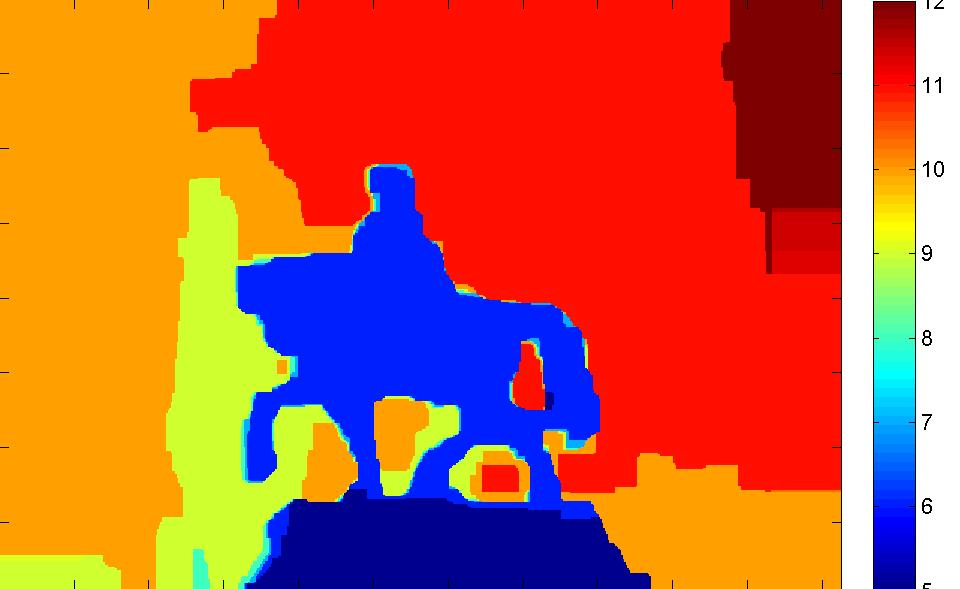

Input stereo image (left and right), disparity map, and stereo saliency.

The input image is used here with permission from Flickr user "Wagman".

Abstract

Stereopsis provides an additional depth cue and plays an important role in the human visual system. This paper explores stereopsis for saliency analysis and presents two approaches to stereo saliency detection from stereoscopic images.

The first approach computes stereo saliency based on the global disparity contrast in the input image. The second approach leverages domain knowledge in stereoscopic photography.
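The global disparity contrast idea behind the first approach can be illustrated with a short Python sketch. It assumes the image has already been segmented into regions, each summarized by a mean disparity, a pixel count, and a normalized centroid; the `Region` type, the `disparity_contrast_saliency` function, the Gaussian spatial weighting, and the `sigma` value are hypothetical choices for illustration, not the paper's exact formulation. Each region's score is the size-weighted sum of its absolute disparity differences to all other regions, attenuated with spatial distance and scaled to [0, 1].

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Region:
    """Hypothetical per-region summary produced by some prior segmentation step."""
    mean_disparity: float           # average disparity of the region's pixels
    size: int                       # number of pixels in the region
    centroid: Tuple[float, float]   # (x, y) in normalized [0, 1] image coordinates


def disparity_contrast_saliency(regions: List[Region], sigma: float = 0.4) -> List[float]:
    """Score each region by its disparity contrast against all other regions.

    Contrast to region j is |d_i - d_j|, weighted by region j's size and by a
    Gaussian falloff in centroid distance; scores are scaled to [0, 1].
    """
    raw = []
    for i, ri in enumerate(regions):
        total = 0.0
        for j, rj in enumerate(regions):
            if i == j:
                continue
            dx = ri.centroid[0] - rj.centroid[0]
            dy = ri.centroid[1] - rj.centroid[1]
            spatial = math.exp(-(dx * dx + dy * dy) / (sigma * sigma))
            total += spatial * rj.size * abs(ri.mean_disparity - rj.mean_disparity)
        raw.append(total)
    peak = max(raw, default=0.0)
    # If every region shares the same disparity there is no contrast at all.
    return [s / peak if peak > 0 else 0.0 for s in raw]


if __name__ == "__main__":
    regions = [
        Region(0.0, 800, (0.5, 0.5)),    # background near screen depth
        Region(-12.0, 150, (0.5, 0.5)),  # object in front of the background
        Region(1.0, 50, (0.1, 0.1)),     # small patch in a corner
    ]
    print(disparity_contrast_saliency(regions))
```

With a large background region near screen depth and a smaller region well in front of it, the foreground region receives the highest score. Weighting by region size makes a region salient when its depth differs from most of the image, rather than from a single small neighbor.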

A good stereoscopic image manages its disparity distribution carefully to avoid 3D fatigue. In particular, salient content tends to be positioned in the stereoscopic comfort zone to alleviate the vergence-accommodation conflict. Accordingly, our method computes the stereo saliency of an image region based on the distance between its perceived location and the comfort zone. Moreover, we consider objects popping out from the screen to be salient, as these objects tend to catch a viewer's attention.

We build a stereo saliency analysis benchmark dataset that contains 1000 stereoscopic images with salient object masks. Our experiments on this dataset show that stereo saliency provides a useful complement to existing visual saliency analysis and that our method can successfully detect salient content in images that are difficult for monocular saliency analysis methods.
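The comfort-zone and pop-out cues of the second approach can likewise be sketched in Python. This sketch assumes per-region mean disparities in pixels, with negative values meaning the region is perceived in front of the screen; the comfort-zone interval, the exponential falloff `tau`, the pop-out weighting (`pop_weight`, `pop_range`), and the function name `comfort_zone_saliency` are all hypothetical illustration choices rather than the paper's model. Regions inside the comfort zone score highest on the first cue, and regions in front of the screen receive an extra pop-out bonus.

```python
import math
from typing import List, Tuple


def comfort_zone_saliency(disparities: List[float],
                          zone: Tuple[float, float] = (-5.0, 5.0),
                          tau: float = 10.0,
                          pop_weight: float = 0.5,
                          pop_range: float = 10.0) -> List[float]:
    """Hypothetical knowledge-based stereo saliency for per-region disparities.

    Convention assumed here: disparity < 0 means perceived in front of the screen.
    """
    near, far = zone
    scores = []
    for d in disparities:
        # Distance from the comfort-zone interval (0 when d lies inside it).
        if d < near:
            dist = near - d
        elif d > far:
            dist = d - far
        else:
            dist = 0.0
        comfort = math.exp(-dist / tau)  # 1 inside the zone, decaying outside
        # Pop-out cue: grows with how far the region sits in front of the screen.
        pop = min(1.0, max(0.0, -d) / pop_range)
        scores.append(comfort + pop_weight * pop)
    peak = max(scores, default=0.0)
    return [s / peak for s in scores] if peak > 0 else scores


if __name__ == "__main__":
    # in front of screen (inside zone), behind screen (inside zone),
    # far behind the screen, far in front of the screen
    print(comfort_zone_saliency([-3.0, 2.0, 30.0, -25.0]))
```

The additive combination is only one option; it lets a strongly popping-out region stay fairly salient even when it lies outside the comfort zone, as with the last input in the example.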

Paper

Yuzhen Niu, Yujie Geng, Xueqing Li, and Feng Liu. Leveraging Stereopsis for Saliency Analysis. IEEE CVPR 2012. PDF

Related Papers

Long Mai, Yuzhen Niu, and Feng Liu. Saliency Aggregation: A Data-driven Approach. IEEE CVPR 2013. Project, PDF

Long Mai and Feng Liu. Comparing Salient Object Detection Results without Ground Truth. ECCV 2014.